Nicole Hamilton correctly notes that gzip won't find distant duplicate data due to its small dictionary size (Deflate's window is only 32 KB). bzip2 is similar, because it's limited to 900 KB of memory.

Instead, try:

LZMA/LZMA2 algorithm (xz, 7z)

The LZMA algorithm is in the same family as Deflate, but uses a much larger dictionary size (customizable; the default is reportedly something like 384 MB, though it varies with the tool and preset). The xz utility, which should be installed by default on most recent Linux distros, is similar to gzip and uses LZMA.

As LZMA detects longer-range redundancy, it will be able to deduplicate your data here. However, it is slower than gzip.

Another option is 7-zip (7z, in the p7zip package), which is an archiver (rather than a single-stream compressor) that uses LZMA by default (written by the author of LZMA). The 7-zip archiver runs its own deduplication at the file level (looking at files with the same extension) when archiving to its own format. This means that if you're willing to replace tar with 7z, you get identical files deduplicated.
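The dictionary-size argument above can be checked with a minimal sketch using Python's standard `zlib` (Deflate, as used by gzip) and `lzma` (as used by xz) modules. It is only an illustration: the function name `compressed_sizes` and the 1 MiB block size are assumptions chosen to mirror the experiment, and the input is a bare duplicated block, not a real tarball.

```python
import lzma
import os
import zlib


def compressed_sizes(block_size=1 << 20):
    """Compress two back-to-back copies of one random block.

    Returns (raw_size, deflate_size, lzma_size) in bytes.
    """
    block = os.urandom(block_size)   # incompressible on its own
    data = block + block             # the duplicate starts block_size bytes later

    # Deflate can only reference the previous 32 KiB, so the second
    # copy is out of reach of its window.
    deflate = zlib.compress(data, 9)

    # Preset 6 (the xz default) uses an 8 MiB dictionary, which covers
    # the 1 MiB distance between the two copies.
    xz = lzma.compress(data, preset=6)

    return len(data), len(deflate), len(xz)


if __name__ == "__main__":
    raw, deflate, xz = compressed_sizes()
    print(f"raw:     {raw} bytes")
    print(f"deflate: {deflate} bytes")
    print(f"lzma:    {xz} bytes")
```

With these settings the Deflate output should stay close to the raw size, while the LZMA output should land near the size of a single block, which matches the behaviour described for gzip versus xz.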

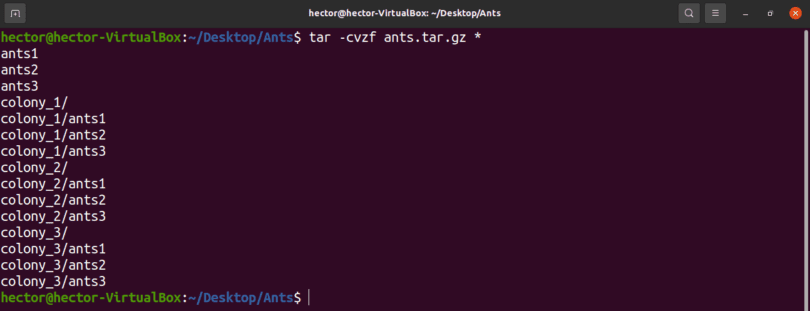

I just did a little experiment where I created a tar archive with duplicate files to see if it would be compressed; to my awe, it was not! Details follow (results indented for reading pleasure):

$ dd if=/dev/urandom bs=1M count=1 of=a
  1048576 bytes (1.0 MB) copied, 0.114354 s, 9.2 MB/s
[...]
  -rw-r--r-- 1 guido guido 2097921 Sep 24 15:51

First I created a 1MiB file of random data (a). Then I copied it to a file b and also hardlinked it to c. When creating the tarball, tar was apparently aware of the hardlink, since the tarball was only ~2MiB and not ~3MiB.

Now I expected gzip to reduce the size of the tarball to ~1MiB since a and b are duplicates, and there should be 1MiB of continuous data repeated inside the tarball, yet this didn't occur.

Why is this? And how could I compress the tarball efficiently in these cases?

lz4 barely adds any compression resource overhead, so it is essentially free, and the same goes for the xxHash32 checksums there, so it can be a safe replacement for uncompressed tar. The bsdtar manpage says that lz4 should have the stream checksum enabled by default, but it doesn't seem to help at all with corruption; only block checksums do. GNU tar doesn't understand lz4 by default, so it requires an explicit -I lz4.

% dd if=/dev/urandom of=file.orig bs=1M count=1
% cp -a file
[...]
Error 66 : Decompression error : ERROR_blockChecksum_invalid

bsdtar --bzip2 - actually checks integrity, but is a very inefficient algo cpu-wise, so best to always avoid it in favor of --xz or zstd these days.

bsdtar --lzop - similar to lz4, somewhat less common, but always respects data consistency via adler32 checksums.

bsdtar --lrzip - opposite of --lzop above wrt compression, but even less common/niche wrt install base and use-cases.

It's still sad that tar can't have some post-data checksum headers, but always using one of these as a go-to option seems to mitigate that shortcoming, and these options seem to cover most common use-cases pretty well.

What DOES NOT provide consistency checks with bsdtar: -z/--gzip, --zstd (not even when it's built without libzstd!), --lz4 without the lz4:block-checksum option.

With zst everywhere, hopefully either libzstd changes its no-checksums default or bsdtar/libarchive might override it, though I wouldn't hold much hope for either of these; just gotta be careful with that particular mode.
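To illustrate why a container-level checksum matters, here is a small sketch that flips one byte inside a compressed stream and tries to decompress it. It does not use bsdtar or lz4; it relies on Python's standard `lzma` module as a stand-in, where the xz container can carry a CRC64 check or none at all. The helper name `corruption_outcome` and the choice of which byte to flip are assumptions made for the example.

```python
import lzma
import os


def corruption_outcome(check):
    """Flip one byte in an xz stream and report what decompression does.

    `check` is an lzma.CHECK_* constant. Returns "detected" if
    decompression fails, "silent corruption" if it succeeds with wrong
    data, or "intact" if the output still matches the original.
    """
    original = os.urandom(1 << 16)  # 64 KiB of random data
    packed = bytearray(
        lzma.compress(original, format=lzma.FORMAT_XZ, check=check)
    )

    # Damage a single byte in the middle of the compressed stream.
    packed[len(packed) // 2] ^= 0xFF

    try:
        restored = lzma.decompress(bytes(packed))
    except lzma.LZMAError:
        return "detected"
    return "intact" if restored == original else "silent corruption"


if __name__ == "__main__":
    print("CRC64 check:", corruption_outcome(lzma.CHECK_CRC64))
    # Without an integrity check the damage may go unnoticed.
    print("no check:   ", corruption_outcome(lzma.CHECK_NONE))
```

With the CRC64 check the damaged stream should be rejected; without any check the outcome depends on where the flipped byte lands, which is the kind of silent failure the notes above warn about for modes without checksums.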
